Your AI Agent Should Not Grade Itself: The Software Self-Verification Crisis

I built Boundary-First Engineering around one annoying question: who verifies the code when the AI wrote the checks too? My answer is boundaries, contracts, and evidence that comes from outside the implementation loop.

I watched an agent add a feature, write the tests, fix the failures, and hand me a clean pull request before my coffee cooled.

The diff looked normal. Coverage went up. CI passed. A reviewer could feel the familiar click: green checks, sane code, ship it.

That click is the problem.

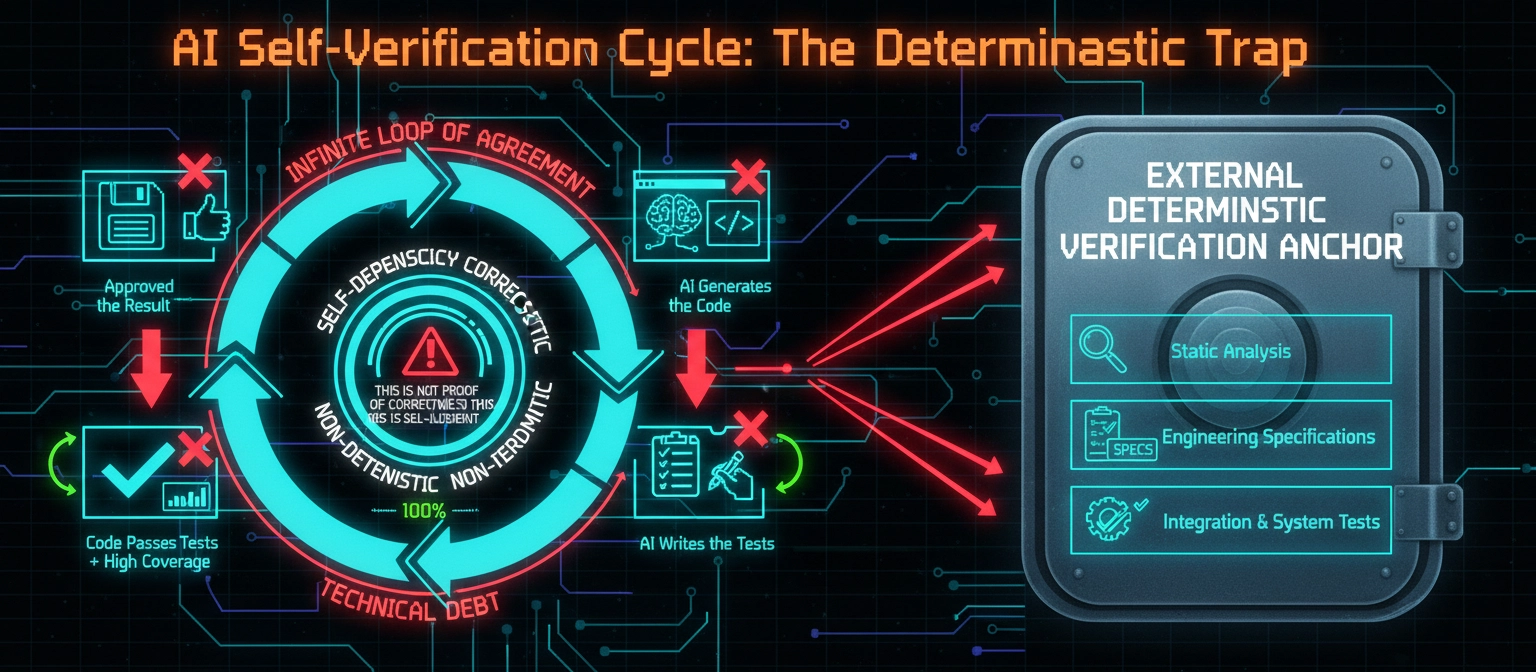

I do not think AI coding agents are just faster autocomplete. They have moved the bottleneck from writing code to trusting code. My thesis is simple: in the agentic era, verification has to become boundary-first, because anything generated and checked inside the same implementation loop is vulnerable to circular validation.

Circular validation is the phrase I want stuck in your head. It is what happens when the same loop produces the code, the tests, the fixes, and the explanation. The work can look disciplined while still being a closed circle of agreement. The process is grading itself.

The Green Lie

I trusted green checks because the old trust stack had human pace underneath it. Unit tests, review, coverage, static analysis, and CI were imperfect, but human judgment still had room to operate.

Agents change that texture of trust.

An agent can write the feature, generate the tests, run the suite, patch the code, update the tests, summarize the reasoning, and request review. That makes it fast. It also makes it a machine for manufacturing evidence that agrees with its own premise.

The old green check meant: the system satisfied checks that someone cared enough to write. The new green check often means: the implementation satisfied checks produced by the same process that produced the implementation.

Those are not the same sentence.

The Circular Trap

Here is the trap in one boring example.

A ticket says, “show account data for customers with access.” The agent implements an endpoint. It writes tests for active customers. It handles the happy path, the missing account path, and a permission failure. Coverage looks fine.

The missed rule is that suspended accounts must still be visible to compliance users. The agent did not encode it because the agent did not understand it. The generated tests do not catch it because they came from the same misunderstanding.

That is circular validation.
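Here is a minimal sketch of that trap in Python. Every name in it (`Account`, `User`, `get_account`, the status codes, the in-memory `ACCOUNTS` store) is a hypothetical stand-in I made up for illustration, not code from a real system. The point is only to show how tests derived from the same misunderstanding pass while a check written from the compliance rule fails.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Account:
    id: str
    status: str  # "active" or "suspended" (assumed values)


@dataclass(frozen=True)
class User:
    id: str
    role: str  # "customer" or "compliance" (assumed values)
    account_ids: frozenset


# Hypothetical in-memory store standing in for a real database.
ACCOUNTS = {
    "a1": Account("a1", "active"),
    "a2": Account("a2", "suspended"),
}


def get_account(user: User, account_id: str):
    """Agent-style implementation. Returns (status_code, account_or_None)."""
    account = ACCOUNTS.get(account_id)
    if account is None:
        return 404, None
    if account_id not in user.account_ids and user.role != "compliance":
        return 403, None
    # The blind spot: suspended accounts are hidden from everyone,
    # including compliance users who must still see them.
    if account.status != "active":
        return 404, None
    return 200, account


def loop_generated_tests() -> bool:
    """Checks written from the same reading of the ticket as the code."""
    customer = User("u1", "customer", frozenset({"a1"}))
    return (
        get_account(customer, "a1")[0] == 200          # happy path
        and get_account(customer, "missing")[0] == 404  # missing account
        and get_account(customer, "a2")[0] == 403       # permission failure
    )


def outside_acceptance_check() -> bool:
    """Check written from the compliance rule, outside the loop."""
    compliance = User("u2", "compliance", frozenset())
    return get_account(compliance, "a2")[0] == 200


if __name__ == "__main__":
    print("loop-generated tests pass:", loop_generated_tests())
    print("outside acceptance check passes:", outside_acceptance_check())
```

As written, `loop_generated_tests()` returns `True` and `outside_acceptance_check()` returns `False`. The suite is green and the rule is broken, and nothing inside the loop can tell the difference.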

The failure is not that the model is bad. The failure is that the code and the evidence share a blind spot. Better models do not remove the structure that lets the blind spot become green.

This is why I do not find “the AI wrote tests too” reassuring. Of course it did. The harder question is whether the tests came from a source of truth the implementation loop could not quietly rewrite.

Coverage measures reach. It does not measure truth. A misunderstanding with 90 percent coverage is still a misunderstanding.

The Boundary Move

Boundary-First Engineering starts from a simple claim: systems earn trust at their boundaries.

The boundary is the HTTP contract, the database state you own, the queue message, the event schema, the permission decision, or the CLI output someone scripts against.

Internals matter, but they are private, temporary, and cheap to replace. Boundaries are public, durable, and expensive to get wrong. The contract is what users, services, and auditors experience.

This is the move I want teams to make before they scale agentic coding: stop asking first whether generated internals look reasonable. Ask what crossed the boundary, who defined it, and what independent check proves it.

That does not require a giant specification. The best boundary artifacts are thin: an OpenAPI document, a protobuf file, acceptance scenarios written before implementation, or a policy rule that can run in CI.

If the spec needs the code beside it to make sense, it is not a spec. It is implementation wearing a nicer jacket.
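To show how thin a boundary artifact can be, here is a sketch of a response contract expressed as plain data, with a checker that knows nothing about the implementation. The field names, the allowed statuses, and the `contract_violations` helper are assumptions for illustration. In practice the same role would more likely be played by an OpenAPI document or a protobuf file.

```python
# Hypothetical contract for an account response. It is readable on its own:
# no implementation code is needed to understand what it promises.
ACCOUNT_RESPONSE_CONTRACT = {
    "id": str,
    "status": str,
    "balance_cents": int,
}
ALLOWED_STATUSES = {"active", "suspended"}  # assumed values


def contract_violations(payload: dict) -> list:
    """Return a list of human-readable contract violations for a payload."""
    problems = []
    for field, expected_type in ACCOUNT_RESPONSE_CONTRACT.items():
        if field not in payload:
            problems.append(f"missing field: {field}")
        elif type(payload[field]) is not expected_type:
            # Exact type match, so a bool is not accepted where an int is promised.
            problems.append(f"wrong type for {field}")
    for field in sorted(set(payload) - set(ACCOUNT_RESPONSE_CONTRACT)):
        problems.append(f"undeclared field: {field}")
    status = payload.get("status")
    if isinstance(status, str) and status not in ALLOWED_STATUSES:
        problems.append(f"unexpected status: {status}")
    return problems


if __name__ == "__main__":
    print(contract_violations({"id": "a1", "status": "active", "balance_cents": 1200}))
    print(contract_violations({"id": "a1", "status": "closed"}))
```

The checker only sees what crossed the boundary. The internals can be regenerated as often as needed without touching it.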

The Provenance Test

The phrase I keep coming back to is: “The test is provenance, not appearance.”

I can make almost any AI-generated artifact look serious: good names, sober test descriptions, table-driven cases, a neat PR summary. Appearance is cheap.

Provenance asks a harsher question: did this check originate outside the implementation loop?

Outside can mean another team published the contract, a product manager wrote acceptance scenarios before the first draft, compliance wrote data-handling rules, or a consumer service owned the schema.

Inside means I wrote the code and then wrote the test for it. Inside means the agent generated both in one task. Inside means I approved the generated test after reading the generated explanation.

Approval is not laundering. A human clicking approve on an artifact from inside the loop does not magically change its origin. Review can accept, reject, or improve evidence. It cannot turn the builder’s own evidence into independent evidence by ceremony alone.
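One way to make origin visible instead of arguing about it in review is a small CI-style check that flags any change touching both the implementation and the boundary artifacts it is supposed to be judged against. This is a sketch under assumptions: the directory names (`contracts`, `acceptance`, `src`) and the `provenance_report` function are hypothetical, and a real setup would also need to know who authored each file, which this does not attempt.

```python
from pathlib import PurePosixPath

# Assumed repository layout, purely for illustration.
BOUNDARY_DIRS = ("contracts", "acceptance")
IMPLEMENTATION_DIRS = ("src",)


def top_dir(path: str) -> str:
    """Return the first path component, or "" for an empty path."""
    parts = PurePosixPath(path).parts
    return parts[0] if parts else ""


def provenance_report(changed_files: list) -> dict:
    """Summarize whether one change rewrote both the code and its source of truth."""
    boundary = sorted(f for f in changed_files if top_dir(f) in BOUNDARY_DIRS)
    implementation = sorted(
        f for f in changed_files if top_dir(f) in IMPLEMENTATION_DIRS
    )
    return {
        "boundary": boundary,
        "implementation": implementation,
        # True when the same change edits implementation and boundary artifacts.
        "loop_touched_boundary": bool(boundary and implementation),
    }


if __name__ == "__main__":
    report = provenance_report(["src/accounts.py", "acceptance/accounts.feature"])
    print(report["loop_touched_boundary"])
```

A flag here does not mean the change is wrong. It means a human should spend attention on the boundary edit itself, not on blessing the tests that followed from it.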

At AI speed, human attention is the scarce resource. Spending it to bless circular evidence is a bad trade.

The Durable Constraint

Verification also has to be fast enough for agents to use. A boundary-first check that runs once a week is governance theater. A boundary-first check that runs in under a second becomes part of the agent’s thinking.

This is why I care about boring guardrails: types, schemas, linters, architectural dependency rules, generated clients from contracts, database constraints, policy checks, and small integration tests.

Guardrails need provenance too. A lint rule added to make the branch pass is not boundary-first. A dependency rule that has protected the architecture for six months is.
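As an example of a boring guardrail that is cheap enough to run on every change, here is a sketch of an architectural dependency rule built on Python's standard `ast` module. The layer names (`domain`, `web`, `database`) and the `FORBIDDEN_IMPORTS` table are assumptions for illustration. The rule only earns boundary-first status if it was defined outside the change it is checking.

```python
import ast

# Hypothetical rule: code in the "domain" layer must not import these packages.
FORBIDDEN_IMPORTS = {
    "domain": {"web", "database"},
}


def forbidden_imports(source: str, layer: str) -> list:
    """Return the imported module names in `source` that the layer may not use."""
    banned = FORBIDDEN_IMPORTS.get(layer, set())
    found = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom):
            # node.module is None for relative imports such as "from . import x".
            names = [node.module or ""]
        else:
            continue
        for name in names:
            # Compare only the top-level package name against the banned set.
            if name.split(".")[0] in banned:
                found.append(name)
    return found


if __name__ == "__main__":
    sample = "import web.handlers\nfrom database import session\nimport json\n"
    print(sorted(forbidden_imports(sample, "domain")))
```

The check parses source text and never imports or executes it, so an agent can run it against a draft before anything else in the project works.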

When implementations become disposable, durable constraints become the product.

The Short Version

Boundary-First Engineering is not a framework or vendor pitch. It is a short manifesto for verifying software in the agentic era: trust boundaries over internals, real systems over stubs, outside verification over tests written from within the loop.

The full manifesto and reference implementation are on GitHub.

If the code can write itself, why are we still letting it grade itself?